This section makes heavy use of differential calculus. It is also related to the material in these sections:

Building a power-series representation of a function

Taylor series are infinite series of a particular type. They are extremely important in practical and theoretical mathematics. Very often we are faced with using functions that aren't that easy to work with in practice, like exponential and logarithmic functions, or trigonometric functions, or tricky combinations of those. Life gets much simpler if we can replace them with something that's easier to work with.

The accuracy and precision of a Taylor series approximation of a function depend on how many terms we have in our series. In most cases we can get to arbitrary precision (as high as we want), but we balance that with the difficulty of deriving and calculating more terms. Taylor series aren't difficult to come up with, either – you'll see.

We'll start by reviewing linear approximations of functions. We'll learn how to form Taylor-series representations of functions beginning at some central point, and we'll discuss the errors involved in approximating functions in this way.

Linear approximations — a review

We showed that the linear approximation of a function $f$ near a point $x = a$ in its domain is

$$f(x) \approx f'(a)(x - a) + f(a)$$

That's really just $y = mx + b,$ the slope-intercept form of a line, but let's just review how it is derived from a function and its derivative. Look at the figure below. It shows a function, $f(x)$, and its linear approximation, $L(x)$ at the point $(a, f(a))$. Remember that any function, when we view it closely enough, looks approximately linear. We can approximate a curve in the region around a point, $a$, in its domain by a line that has the same slope as the curve at $x = a$, and is tangent to $f(x)$ there, too. Using the approximation, we can estimate values of the function in the domain around $x = a$.

Here's how we derive $L(x)$. Start with the linear equation $y = mx + b$, and plug in the slope and the y-intercept.

$$y = mx + b \phantom{000} \text{ at } \: (a, f(a))$$

Notice that $f'(a)$ is the slope of $f(x)$ at $x = a,$ and $f(a) - f'(a) \cdot a$ is just $b = y - mx,$ the y-intercept. Substituting these two quantities into the linear equation $y = mx + b$ and rearranging, we get:

$$ \begin{align} L(x) &= f'(a)\cdot x + f(a) - f'(a)\cdot a \\[5pt] &= f(a) + f'(a)(x - a) \end{align}$$

There are two features of linear approximation that we will use in developing even better polynomial approximations of functions. They are

- The value of the function and the value of the approximation must be the same at $x = a$, where $a$ is the point around which we build our approximation: $L(a) = f(a),$ and

- The first derivative of the function and its approximation must be the same at $x = a$: $L'(a) = f'(a).$

In what follows, we'll further insist that all derivatives of the function and its approximation must be the same at $x = a.$ It's all in the green box below.

Linear approximations

A linear approximation of a function near some point in its domain is just the equation of the line tangent to that function at that point.

The linear approximation of a function $f(x)$ near $x = a$, and its special case for $x = 0$, follows from the well-known point-slope formula of a line with slope $m$ through a point $(x_o, \; y_o)$: $y - y_o = m(x - x_o).$

$$f(x) \approx f(a) + f'(a)(x - a)$$

centered at $x = a$

$$f(x) \approx f(0) + f'(0) \cdot x$$

centered at $x = 0$

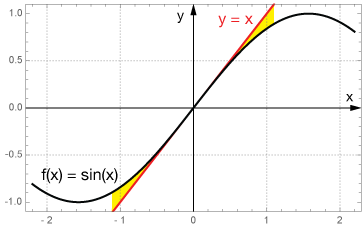

Example: Linear approximation of $f(x) = \sin(x)$ near $x = 0$

If we want to approximate $f(x) = \sin(x)$ with a line passing through $(0, f(0))$, we need a point and a slope. Our point is $(0, 0)$. The derivative of $\sin(x)$ at $x = 0$ will give us our slope: $f'(0) = \cos(0) = 1.$ So our approximation formula is

$$ \begin{align} y - y_o &= m(x - x_o) \\[5pt] y - 0 &= 1(x - 0) \\[5pt] y &= x \end{align}$$

That's a pretty simple approximation. It says that the value of $\sin(x)$ is approximately x for values of x near zero. That's pretty remarkable. If you can tolerate a small amount of error, this approximation involves no multiplication, the thing that really eats up computer time over many iterations. Direct calculation of sines using something like the Taylor-series representation we'll develop below, is much more time-consuming. Here is a graph of $\sin(x)$ vs. $x$, with $x$ in radians.

The table below compares this approximation with calculated values of the function for several values of $x$.

Table of approximations and exact values of sin(x)

(x in radians)

| $x$ (rad) | approx. | exact | error* |
|---|---|---|---|
| 0.001 | 0.001 | 0.0010000 | 0.00002% |
| 0.01 | 0.01 | 0.0099998 | 0.002% |
| 0.1 | 0.1 | 0.09983 | 0.167% |
| 0.5 | 0.5 | 0.47943 | 4.3% |
| 1.0 | 1.0 | 0.84147 | 19% |

*error $= \frac{|\text{exact} - \text{approx}|}{\text{exact}} \times 100\%$

You can see that this approximation ultimately breaks down as we get farther from $x = 0$, but it is very good for values of $x$ near zero. So if someone asks you, "Hey, what's the sine of 0.1?," you can confidently answer, "About 0.1."
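If you'd like to check numbers like these yourself, here is a minimal Python sketch. The function name `percent_error` is just my own label for illustration, and the "exact" values come from the standard library's `math.sin`.

```python
import math

def percent_error(x):
    """Relative error, in percent, of approximating sin(x) by x."""
    exact = math.sin(x)
    return abs(exact - x) / exact * 100

# Print x, the library value of sin(x), and the error of the estimate sin(x) ~ x
for x in (0.001, 0.01, 0.1, 0.5, 1.0):
    print(f"{x:6.3f}  {math.sin(x):.7f}  {percent_error(x):.5f}%")
```

The error grows roughly like $x^2/6$, which is why the estimate is so good near zero and poor by the time we reach $x = 1$.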

Next we'll ask, what about approximating these curved functions with curved functions instead of lines? We'll see how that will lead to Taylor series.

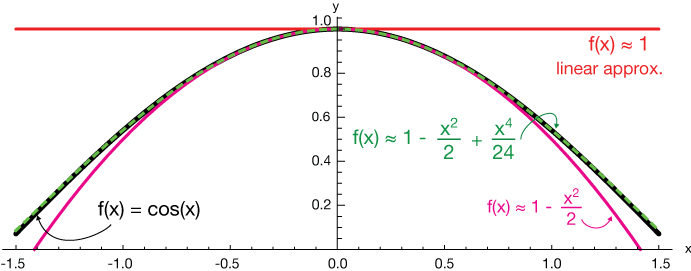

Approximating $f(x) = \cos(x)$

Can we do better than a linear approximation?

Take a look at the plot of $f(x) = \cos(x)$ below (thick black curve). Superimposed on it are the graphs of three successively better approximations, each centered around $x = 0.$ They are linear, quadratic and quartic approximations.

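Notice that we haven't included any odd polynomial terms. Because $\cos(x)$ is an even function, the coefficients of the odd powers of $x$ are zero, so the three approximations are $y = 1$, $y = 1 - \frac{x^2}{2}$ and $y = 1 - \frac{x^2}{2} + \frac{x^4}{24}$.

Finally, the dashed green curve includes a quartic term, and clearly it matches $\cos(x)$ quite well over the region shown, even relatively far from $x = 0.$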

Don't worry about how we got those numerical coefficients just now. That will become clear in a bit.

How it's done

In the linear approximation, we made the assumptions that the value of the approximation and its slope should be the same at the point $x = a$. That just makes sense.

Well, we can generalize that kind of thinking to higher derivatives. It's also a reasonable goal to expect the curvature of a better approximation to match the curvature of the function we're trying to approximate.

If the curvature of $f(x)$ is concave-downward, then our approximation should be, too. And that means that both the function and the approximation should have the same second derivative.

We might also insist that the change in curvature (the third derivative) be the same ... and so on.

Requirements for a good polynomial approximation near x = a

In order for a polynomial function $P(x)$ to be a good approximation of a function $f(x)$,

- The value of the approximation must be the same as the value of the function at $x = a$: $P(a) = f(a)$

- The slope of the approximation must be the same as the slope of the function at $x = a$: $P'(a) = f'(a)$

- The curvature of the approximation must be the same as the curvature of the function at $x = a$: $P''(a) = f''(a)$

- ... and so on. Each successive derivative of the approximation and the function at $x = a$ must be equal: $P^{(n)}(a) = f^{(n)}(a)$

Example 1

Finding a series approximation of $f(x) = e^x$

Let's explore the derivation of a Taylor-series approximation of a function by making a polynomial approximation of the exponential function,

$$f(x) = e^x$$

Our approximation will take the form of a 5th-degree polynomial with unknown coefficients. I've chosen degree 5 because it's enough to show that important patterns emerge, but there's nothing special about 5. It looks like this:

$$f(x) \approx g(x) = Ax^5 + Bx^4 + Cx^3 +Dx^2 + Ex + F$$

Now we said above that the approximation and the function, along with all of their derivatives, have to be equal at the point in which we're interested. We'll center this approximation about $x=0$ for convenience. Here are the derivatives of the function, those derivatives evaluated at $x=0$, and the corresponding derivatives of our approximation, $g(x)$.

A note on derivative notation

We often write the first, second and third derivatives of a function $f(x)$ as $f'(x), \, f''(x)$ and $f'''(x),$ but writing all those primes gets tedious for higher derivatives, so we write $f^{(4)}(x)$ for the fourth derivative, $f^{(5)}(x)$ for the fifth, and so on.

$$ \begin{align} f(x) = e^x \phantom{00000} f(0) &= 1 \phantom{000} g(x) = Ax^5 + Bx^4 + Cx^3 + Dx^2 + Ex + F \\[5pt] f'(x) = e^x \phantom{0000} f'(0) &= 1 \phantom{000} g'(x) = 5 Ax^4 + 4Bx^3 + 3Cx^2 + 2Dx + E \\[5pt] f''(x) = e^x \phantom{0000} f''(0) &= 1 \phantom{000} g''(x) = 20 Ax^3 + 12 Bx^2 + 6Cx + 2D \\[5pt] f^{(3)}(x) = e^x \phantom{000} f^{(3)}(0) &= 1 \phantom{000} g^{(3)}(x) = 60 Ax^2 + 24 Bx + 6C \\[5pt] f^{(4)}(x) = e^x \phantom{000} f^{(4)}(0) &= 1 \phantom{000} g^{(4)}(x) = 120 Ax + 24B \\[5pt] f^{(5)}(x) = e^x \phantom{000} f^{(5)}(0) &= 1 \phantom{000} g^{(5)}(x) = 120 A \end{align}$$

Now if we equate $g(0) = f(0), \: g'(0) = f'(0),$ and so on, we can solve for the coefficients, $A, \; B, \; C, \; D, \; E$ & $F$, of our polynomial, $g(x).$ For example, $g^{(5)}(0) = 120A = 1$ gives $A = \frac{1}{120},$ and $g(0) = F = 1.$

$$A = \frac{1}{120} \phantom{000} B = \frac{1}{24} \phantom{000} C = \frac{1}{6} \phantom{000} D = \frac{1}{2} \phantom{000} E = 1 \phantom{000} F = 1$$
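The coefficient matching can be sketched in a few lines of Python. This is only an illustration under one observation: the $k^{th}$ derivative of a polynomial at $x = 0$ is $k!$ times the coefficient of $x^k$, so each coefficient is $f^{(k)}(0)/k!$. The names `derivs_at_0` and `coeffs` are mine, not part of any library.

```python
from fractions import Fraction
from math import factorial

# For f(x) = e^x, every derivative evaluated at x = 0 equals 1.
derivs_at_0 = [1, 1, 1, 1, 1, 1]   # f(0), f'(0), ..., f^(5)(0)

# Matching the k-th derivatives at 0 gives: coefficient of x^k = f^(k)(0) / k!
coeffs = [Fraction(d, factorial(k)) for k, d in enumerate(derivs_at_0)]

for k, c in enumerate(coeffs):
    print(f"coefficient of x^{k}: {c}")
```

Using exact fractions keeps the denominators visible, which makes the factorial pattern easy to spot.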

The resulting polynomial, just a sum of these terms, looks like this:

$$f(x) \approx 1 + x + \frac{x^2}{2} + \frac{x^3}{6} + \frac{x^4}{24} + \frac{x^5}{120}$$

Now we can recognize some patterns, including that the factorial function is hidden in those denominators [recall that $n! = n(n-1)(n-2) \dots 3 \cdot 2 \cdot 1, \text{ and } 0! = 1$]:

$$f(x) \approx \frac{x^0}{0!} + \frac{x^1}{1!} + \frac{x^2}{2!} + \frac{x^3}{3!} + \frac{x^4}{4!} + \frac{x^5}{5!} + \dots $$

This pattern will continue indefinitely, and we can write the series approximation in shorthand like this:

$$e^x = \sum_{n = 0}^{\infty} \, \frac{x^n}{n!}$$

We can use this sum with $x = 1$ to estimate the value of $e = e^1$. Here's a table from a spreadsheet. Each row represents one more term added to the series. Notice that each successive term adds a smaller number onto the sum. This series is said to converge to a limit of $e^x$.

The table shows successive values of n, n! and 1/n! used in the series approximation of $e^x$. Notice that each new term is smaller than the previous one. That is, the size of the next term is decreasing.

As terms are added to our series approximation of $e^x$, the digits nearest the decimal point become fixed and no longer change (green). This is called convergence, and we say that the series is converging to the number $e$. If more precision is required, we just add more terms to the series.
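Here is a short Python sketch of that convergence, in case you'd like to watch the digits settle for yourself. The function name `exp_partial_sum` is my own choice, and each new term is built from the previous one so that no large factorials are ever computed.

```python
import math

def exp_partial_sum(x, n_terms):
    """Sum of the first n_terms terms of the series for e^x."""
    total = 0.0
    term = 1.0                 # the n = 0 term, x^0 / 0!
    for n in range(n_terms):
        total += term
        term *= x / (n + 1)    # turn x^n / n! into x^(n+1) / (n+1)!
    return total

# Each row adds one more term of the series evaluated at x = 1
for n_terms in range(1, 13):
    print(n_terms, f"{exp_partial_sum(1.0, n_terms):.10f}")
print("math.e =", f"{math.e:.10f}")
```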

For more on the transcendental number $e$, the base of all continuously-growing exponential functions, you might want to check out the exponential functions section.


A general formula for Taylor series centered on x = 0

If we go back to our derivation of the series approximation of $f(x) = e^x$, we can see that a general formula for the series approximation, centered around $x = 0$, of any function that has derivatives of every order at $x = 0$ is:

$$f(x) \approx \sum_{n = 0}^{\infty} \frac{f^{(n)}(0)}{n!} x^n$$

Here $f^{(n)}(0)$ is the $n^{th}$ derivative of $f(x)$ evaluated at $x = 0$. In the special case where the series is centered at $x = 0$, the Taylor series is called a MacLaurin series.

The MacLaurin Series

The MacLaurin series is a Taylor series approximation of a function $f(x)$ centered at $x = 0$. $f^{(n)}(0)$ are the $n^{th}$ derivatives of $f(x)$ evaluated at $x=0$.

$$f(x) \approx \sum_{n = 0}^{\infty} \frac{f^{(n)}(0)}{n!} x^n$$

Taylor series not centered at x = 0

If we choose to center our approximation at some other point, $x = a$, in the domain of $f(x)$, then the powers of $x$ become powers of the distance from the center, $(x - a)$, and we just evaluate the derivatives at $x = a$. The generalized Taylor series looks like this:

$$f(x) \approx \sum_{n = 0}^{\infty} \frac{f^{(n)}(a)}{n!} (x - a)^n$$

Notice that if $a = 0$, we just end up with the MacLaurin series formula.

The generalized Taylor series

$$f(x) \approx \sum_{n = 0}^{\infty} \frac{f^{(n)}(a)}{n!} (x - a)^n$$
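The general formula translates almost word for word into code. The sketch below assumes we already know a finite list of derivative values at the center, so it evaluates a truncated series (a Taylor polynomial) rather than the infinite sum. The function name `taylor_poly` is hypothetical, chosen just for this illustration.

```python
from math import factorial, exp

def taylor_poly(derivs_at_a, a, x):
    """Evaluate the sum of f^(n)(a)/n! * (x - a)^n over the supplied derivatives."""
    return sum(d / factorial(n) * (x - a) ** n
               for n, d in enumerate(derivs_at_a))

# Example: e^x centered at a = 1, where every derivative equals e
derivs = [exp(1.0)] * 8
print(taylor_poly(derivs, 1.0, 1.5), exp(1.5))
```

Setting `a = 0` in the same function gives the MacLaurin case.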

Example 2

Derive the Taylor series approximation of $f(x) = \sin(x)$ near $x = 0$.

Because this approximation will be centered at $x = 0$, it's a MacLaurin series.

To stay organized, try making yourself a table of derivatives, derivatives evaluated at $x=0$, and terms of the series.

| $f^{(n)}(x)$ | $f^{(n)}(0)$ | $\frac{f^{(n)}(0)}{n!} x^n$ |
|---|---|---|
| $f(x) = \sin(x)$ | $f(0) = 0$ | $\require{cancel} \cancel{\frac{0}{0!} x^0}$ |
| $f'(x) = \cos(x)$ | $f'(0) = 1$ | $\frac{1}{1!} x^1$ |
| $f''(x) = -\sin(x)$ | $f''(0) = 0$ | $\cancel{\frac{0}{2!} x^2}$ |
| $f^{(3)}(x) = -\cos(x)$ | $f^{(3)}(0) = -1$ | $\frac{-1}{3!} x^3$ |
| $f^{(4)}(x) = \sin(x)$ | $f^{(4)}(0) = 0$ | $\cancel{\frac{0}{4!} x^4}$ |
| $f^{(5)}(x) = \cos(x)$ | $f^{(5)}(0) = 1$ | $\frac{1}{5!} x^5$ |

Be on the lookout for patterns as you calculate the terms of the series. Looking at the right column of the table it's pretty obvious that terms with even powers of x drop out because the derivative is zero. It also appears that the sign of each term alternates between positive and negative.

If we write the remaining terms out, we can speculate (intelligently) on further terms, such as the last term (the $x^7$ term):

$$\sin(x) \approx \frac{x^1}{1!} - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!} + \dots $$

Finally, we should try to capture that series of terms in compact summation notation. We try always to start the index, $n$, at zero, but sometimes it just isn't convenient. It works fine here, though. The alternating sign is represented by $(-1)^n$, giving us $1$ for $n = 0$, $-1$ for $n = 1$, and so on. The exponent and factorial terms are $2n+1$ and $(2n+1)!$ to ensure only odd numbers.

$$\sin(x) \approx \sum_{n = 0}^{\infty} (-1)^n \frac{x^{2n + 1}}{(2n + 1)!}$$
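A quick way to convince yourself that the summation formula is right is to code it up and compare with a library sine. This is a minimal sketch; `sin_series` is my own name, and `n_terms` is the number of nonzero terms kept.

```python
import math

def sin_series(x, n_terms):
    """Partial sum of (-1)^n x^(2n+1) / (2n+1)! for n = 0 .. n_terms - 1."""
    return sum((-1) ** n * x ** (2 * n + 1) / math.factorial(2 * n + 1)
               for n in range(n_terms))

# Watch the partial sums approach math.sin(1.0) as terms are added
for n_terms in range(1, 6):
    print(n_terms, sin_series(1.0, n_terms), math.sin(1.0))
```

With `n_terms = 1` this reduces to the linear approximation $\sin(x) \approx x$.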

Example 3

Find the Taylor series approximation of $f(x) = \ln(x)$ near $x = 1$

Notice that this is a general Taylor series, not a MacLaurin series.

First, organize and set up a table of $f^{(n)}(x)$, $f^{(n)}(1)$ and the terms of the series (below).

Notice that in this example we've centered the approximation on $x = 1$ because $\ln(x)$ is not defined at $x=0$.

You might need to take a minute to work out those derivatives for yourself, but they're correct. The first term of the series vanishes but the successive terms are quite predictable and alternate sign, + - + -.

The terms of the series are summed below. Try to notice the patterns and ask how you might extend the series by one or two more terms.

| $f^{(n)}(x)$ | $f^{(n)}(1)$ | $\frac{f^{(n)}(1)}{n!} (x - 1)^n$ |
|---|---|---|
| $f(x) = \ln(x)$ | $f(1) = 0$ | $\require{cancel} \cancel{\frac{0}{0!} (x - 1)^0}$ |
| $f'(x) = \frac{1}{x}$ | $f'(1) = 1$ | $\frac{1}{1!} (x - 1)^1$ |
| $f''(x) = \frac{-1}{x^2}$ | $f''(1) = -1$ | $\frac{-1}{2!} (x - 1)^2$ |
| $f^{(3)}(x) = \frac{2}{x^3}$ | $f^{(3)}(1) = 2$ | $\frac{2}{3!} (x - 1)^3$ |
| $f^{(4)}(x) = \frac{-6}{x^4}$ | $f^{(4)}(1) = -6$ | $\frac{-6}{4!} (x - 1)^4$ |
| $f^{(5)}(x) = \frac{24}{x^5}$ | $f^{(5)}(1) = 24$ | $\frac{24}{5!} (x - 1)^5$ |

$$\ln(x) \approx \frac{1}{1!}(x - 1)^1 - \frac{1}{2!} (x - 1)^2 + \frac{2}{3!} (x - 1)^3 - \frac{6}{4!} (x - 1)^4 + \frac{24}{5!} (x - 1)^5$$

Now notice that the numerators of the fractions of each term are the factorials $0!, \; 1!, \; 2!, \dots$:

$$\ln(x) \approx \frac{0!}{1!}(x - 1)^1 - \frac{1!}{2!} (x - 1)^2 + \frac{2!}{3!} (x - 1)^3 - \frac{3!}{4!} (x - 1)^4 + \frac{4!}{5!} (x - 1)^5$$

If we use the definition of factorials, e.g. $3! = 3 \cdot 2 \cdot 1$, it's easy to see how the factorial ratios above can be reduced, like this:

$$\frac{2!}{3!} = \frac{2\cdot 1}{3\cdot 2\cdot 1} = \frac{1}{3}$$

So we can rewrite our series in its simplest form:

$$\ln(x) = \frac{(x - 1)^1}{1} - \frac{(x - 1)^2}{2} + \frac{(x - 1)^3}{3} - \frac{(x - 1)^4}{4} + \frac{(x - 1)^5}{5} - \dots$$

Finally, write the summation notation. It's easier, in this case, to start with $n = 1$ in the sum, and the $(-1)^{(n+1)}$ term provides the right alternation of sign. You'll have to set up these summations with a fair bit of trial and error before you develop some intuition for it. Don't worry, it'll happen with some practice.

$$\ln(x) \approx \sum_{n = 1}^{\infty} (-1)^{n + 1} \frac{(x - 1)^n}{n}$$
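Here is a small Python sketch of that sum, compared against the library logarithm. The name `ln_series` is mine. One caution that goes beyond the derivation above: this particular series only converges for $0 < x \le 2$, so the sketch is meant for values of $x$ fairly close to 1.

```python
import math

def ln_series(x, n_terms):
    """Partial sum of (-1)^(n+1) (x - 1)^n / n for n = 1 .. n_terms."""
    return sum((-1) ** (n + 1) * (x - 1) ** n / n
               for n in range(1, n_terms + 1))

# Compare a 20-term partial sum with math.log at a few points near x = 1
for x in (0.5, 0.9, 1.1, 1.5):
    print(x, ln_series(x, 20), math.log(x))
```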

Example 4

The MacLaurin series expansion of $f(x) = \tan(x)$

The expansion is:

$$f(x) \approx \sum_{n = 0}^{\infty} \frac{f^{(n)}(0)}{n!} x^n$$

First we'll calculate the derivatives. These derivatives get more complicated with each round. Here are the first five:

$$ \begin{align} f(x) &= \tan(x) \\[5pt] f'(x) &= \bf \sec^2(x) \\[5pt] f''(x) &= 2 \sec^2(x) \tan(x) \\[5pt] f'''(x) &= 4 \sec^2(x) \tan^2 (x) + \bf 2 \sec^4(x) \\[5pt] f^{(4)}(x) &= 8 \sec^2(x) \tan^3(x) + 16 \sec^4(x) \tan(x) \\[5pt] f^{(5)}(x) &= 8[2 \sec^2(x) \tan^4(x) + 3 \sec^4(x) \tan^2(x) \\[5pt] &\; + 4 \sec^4(x) \tan^2(x) + \bf 2 \sec^6(x) \\[5pt] &\; + 4 \sec^4(x) \tan^2(x)] \end{align}$$

The bold terms are the only parts of these derivatives that survive setting $x=0$. All of the tangent terms vanish because $\tan(0) = 0$, whereas $\sec(0) = 1$.

The derivatives, evaluated at $x = 0$, are:

$$ \begin{matrix} f(0) = 0 && f'''(0) = 2 \\[5pt] f'(0) = 1 && f^{(4)}(0) = 0 \\[5pt] f''(0) = 0 && f^{(5)}(0) = 16 \end{matrix}$$

Now we can construct the MacLaurin series. Notice that only odd powers of $x$ are present.

Note: We could find other representations of this function, for example, by using the trig. identity $\sec^2(x) = \tan^2(x) + 1$ in the derivatives above.

$$\tan(x) \approx \frac{0x^0}{0!} + \frac{1x^1}{1!} + \frac{0x^2}{2!} + \frac{2x^3}{3!} + \frac{0x^4}{4!} + \frac{16x^5}{5!} + \dots$$

Getting rid of the zero terms gives us the series expansion. Here I've included an $x^7$ term. As far as I know, there's no convenient formula for those coefficients: 1, 2, 16, 272, ..., so I'll leave them as they are.

$$\tan(x) \approx \frac{x^1}{1!} + \frac{2x^3}{3!} + \frac{16x^5}{5!} + \frac{272x^7}{7!} + \dots$$

Finally, let's calculate some values of $\tan(x)$ using this expansion and a calculator, and we can compare the results. In the table below, $\tan(x)$ is calculated using the first four terms of our expansion (to the $x^7$ term) and a calculator, which presumably uses a more efficient algorithm for calculating the tangent.

The expansion is very accurate up to about a unit away from $x = 0$, where it falls apart a bit. Because of the large exponents and factorials involved in this expansion, it is an inefficient algorithm for calculating tangents to high precision far from the center of the expansion.
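To reproduce a comparison like that one, here is a minimal Python sketch. It uses the nonzero derivative values found above ($1, 2, 16$) together with the $x^7$ coefficient $272$, and compares the result with the library's `math.tan`. The name `tan_series` is my own.

```python
import math

def tan_series(x):
    """First four nonzero terms: x + 2x^3/3! + 16x^5/5! + 272x^7/7!."""
    return (x
            + 2 * x**3 / math.factorial(3)
            + 16 * x**5 / math.factorial(5)
            + 272 * x**7 / math.factorial(7))

# Series value, library value, and their difference
for x in (0.1, 0.25, 0.5, 1.0, 1.25):
    approx, exact = tan_series(x), math.tan(x)
    print(f"{x:5.2f}  {approx:.6f}  {exact:.6f}  {exact - approx:.6f}")
```

The difference is tiny near zero and grows quickly as $x$ approaches $\pi/2$, where the tangent itself blows up.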

Example 5

Two ways to get to the MacLaurin series for $f(x) = \sin^2(x)$

In this example, we'll find the MacLaurin series for $f(x) = \sin^2(x)$ by the direct method — finding derivatives, evaluating them at x = 0, and so on, and then by squaring part of the MacLaurin series for $f(x) = \sin(x)$.

Direct method

First we need some derivatives. Many will vanish when evaluated at $x = 0$, so let's go through the painstaking process of calculating a bunch of them:

$$ \begin{align} f(x) &= \sin^2(x) \\[5pt] f'(x) &= 2 \sin(x) \, \cos(x) \\[5pt] f''(x) &= 2 \cos^2(x) - 2 \sin^2(x) \\[5pt] f'''(x) &= -4 \cos(x) \, \sin(x) - 4 \sin(x) \, \cos(x) \\[5pt] &= -8 \cos(x) \, \sin(x) \\[5pt] f^{(4)}(x) &= 8 \sin^2(x) - 8 \cos^2(x) \\[5pt] f^{(5)}(x) &= 16\sin(x) \cos(x) + 16 \sin(x) \cos(x) \\[5pt] &= 32 \sin(x) \, \cos(x) \\[5pt] f^{(6)}(x) &= 32 \cos^2(x) - 32 \sin^2(x) \\[5pt] f^{(7)}(x) &= -64 \cos(x)\sin(x) - 64 \sin(x)\cos(x) \\[5pt] &= -128 \sin(x) \, \cos(x) \\[5pt] f^{(8)}(x) &= -128 \cos^2(x) + 128 \sin^2(x) \end{align}$$

Evaluating these at x = 0 gives:

$$ \begin{matrix} f(0) = 0 && f^{(5)}(0) = 0 \\[5pt] f'(0) = 0 && f^{(6)}(0) = 32 \\[5pt] f''(0) = 2 && f^{(7)}(0) = 0 \\[5pt] f'''(0) = 0 && f^{(8)}(0) = -128 \\[5pt] f^{(4)}(0) = -8 && \end{matrix}$$

Collecting the nonzero terms in our series formula gives:

$$\sin^2(x) \approx \frac{2x^2}{2!} - \frac{8x^4}{4!} + \frac{32x^6}{6!} - \frac{128x^8}{8!} + \dots$$

We can reduce the fractions by expanding the factorials to get this series:

$$\sin^2(x) \approx x^2 - \frac{x^4}{3} + \frac{2x^6}{45} - \frac{x^8}{315} + \dots$$

Now from the $f(x) = \sin(x)$ series

Now let's do this by squaring the first three terms of the MacLaurin series (in example 2 above) for $\sin(x)$:

$$\sin(x) \approx x^1 - \frac{1}{3!} x^3 + \frac{1}{5!} x^5 + \dots$$

Squaring the first three terms means squaring a trinomial:

$$\sin^2(x) \approx \left( x - \frac{1}{3!} x^3 + \frac{1}{5!} x^5 \right)^2$$

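That's a little tedious, but the result, after collecting terms of like powers of $x$, is:

$$\approx x^2 - \frac{2}{3!} x^4 + \left( \frac{1}{3! \, 3!} + \frac{2}{5!} \right) x^6 - \frac{2}{3! \, 5!} x^8 + \frac{1}{5! \, 5!} x^{10}$$

Here again, we can reduce the numerical parts to get $x^2 - \frac{x^4}{3} + \frac{2x^6}{45} - \frac{x^8}{360} + \frac{x^{10}}{14400}$, a pretty good approximation of our series. The first three terms match the direct method. The last two terms don't match, but that's because we only squared the first three terms of the sine series; the $x^7$ term of the sine series would also contribute to the $x^8$ term of the square.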

Still, this method represents a powerful feature of series, namely that we can do many operations with them, some that would be more difficult with the original function.
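Squaring a truncated series is just polynomial multiplication, which is easy to hand to a computer. The sketch below stores the three-term sine polynomial as a list of exact fractions indexed by the power of $x$ and multiplies it by itself. The helper `poly_mul` is a hypothetical name of my own, not a library function.

```python
from fractions import Fraction
from math import factorial

# Coefficients of x - x^3/3! + x^5/5!, indexed by the power of x
sin_coeffs = [Fraction(0), Fraction(1), Fraction(0),
              Fraction(-1, factorial(3)), Fraction(0),
              Fraction(1, factorial(5))]

def poly_mul(p, q):
    """Multiply two polynomials given as coefficient lists."""
    out = [Fraction(0)] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b   # x^i times x^j contributes to x^(i+j)
    return out

squared = poly_mul(sin_coeffs, sin_coeffs)
for power, c in enumerate(squared):
    if c != 0:
        print(f"x^{power}: {c}")
```

The $x^2$, $x^4$ and $x^6$ coefficients agree with the direct method; the higher ones differ because the input polynomial was cut off at $x^5$.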

Practice problems

-

Find the first four nonzero terms of the MacLaurin series for $f(x) = \sin(x^2)$

Solution

This isn't as difficult as it may at first seem, because we already know the MacLaurin series for $\sin(x)$:

$$\sin(x) \approx x - \frac{x^3}{3!} + \frac{x^5}{5!} - \frac{x^7}{7!} + \dots$$

To find the expansion for $\sin(x^2),$ simply plug in $x^2$ for $x$ in the sine series:

$$\sin(x^2) \approx x^2 - \frac{x^6}{3!} + \frac{x^{10}}{5!} - \frac{x^{14}}{7!} + \dots$$

As long as we can tolerate whatever error is introduced by using a series instead of the function it represents (and that error can usually be made to be as small as we need), series can help us to do a lot of tasks more easily.

-

Find the first two nonzero terms of the MacLaurin series for $f(x) = \tan^{-1}(x)$.

Solution

First we need some derivatives. Unfortunately, these are lousy ones. Here are the first three derivatives of $\tan^{-1}(x):$

$$ \begin{align} f(x) &= \tan^{-1}(x) \\[5pt] f'(x) &= \frac{1}{1 + x^2} \\[5pt] f''(x) &= -(1 + x^2)^{-2} (2x) = \frac{-2x}{(1 + x^2)^2} \\[5pt] f'''(x) &= \frac{-2(1 + x^2)^2 + 2x(2)(1 + x^2)(2x)}{(1 + x^2)^4} \\[5pt] &= - \frac{2}{(1 + x^2)^2} + \frac{8x^2}{(1 + x^2)^3} \end{align}$$

Now evaluate these derivatives at x = 0 (MacLaurin series):

$$ \begin{matrix} f(0) = 0 && f''(0) = 0 \\[5pt] f'(0) = 1 && f'''(0) = -2 \end{matrix}$$

I like to plug everything into the MacLaurin series formula as it is, just to keep things organized:

$$\tan^{-1}(x) = \frac{0x^0}{0!} + \frac{1x^1}{1!} + \frac{0x^2}{2!} - \frac{2x^3}{3!} + \dots$$

Then simplify:

$$\tan^{-1}(x) \approx x - \frac{2x^3}{3!} = x - \frac{x^3}{3}$$

-

The indefinite integral of $f(x) = \cos(x^3)$ is tricky to do. Use the first four nonzero terms of the MacLaurin series of $\cos(x)$ to estimate the integral.

Solution

We already know the MacLaurin series for $\cos(x)$:

$$\cos(x) \approx 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \frac{x^6}{6!} + \dots $$

We can approximate $f(x) = \cos(x^3)$ by inserting an $x^3$ for every x in that expansion, like this:

$$\cos(x^3) \approx 1 - \frac{x^6}{2!} + \frac{x^{12}}{4!} - \frac{x^{18}}{6!} + \dots $$

Now the integral of a sum is the sum of integrals, so we simply integrate this polynomial function term-by-term:

$$\int \cos(x^3) \, dx = C + x - \frac{x^7}{7 \cdot 2!} + \frac{x^{13}}{13 \cdot 4!} - \frac{x^{19}}{19 \cdot 6!} + \dots$$

Notice that it wouldn't be difficult at all to add more terms to this series – or even predict the pattern to do so. With series, we're able to approximate, with more-or-less arbitrary precision, a difficult integral.

-

Find the MacLaurin series for $\int_0^x \cos(t^3) \, dt$.

Solution

It might be tempting to start taking derivatives of this integral, recalling that the fundamental theorem of calculus gives us the first derivatives basically for free, but that gets cumbersome quickly.

We know that the series for $\cos(t)$ is:

$$\cos(t) \approx 1 - \frac{t^2}{2!} + \frac{t^4}{4!} - \frac{t^6}{6!} + \dots$$

If we substitute $t^3$ for $t$ in the series, we get

$$\cos(t^3) \approx 1 - \frac{t^6}{2!} + \frac{t^{12}}{4!} - \frac{t^{18}}{6!} + \dots$$

Now the integral of a sum is the sum of integrals, so we simply integrate this polynomial series term-by-term:

$$\int \cos(t^3) \, dt = t - \frac{t^7}{7\cdot 2!} + \frac{t^{13}}{13\cdot 4!} - \frac{t^{19}}{19\cdot 6!} + \dots$$

Evaluating at $t = 0$ (the lower limit of integration) gives all zeros, so the definite integral is just the series evaluated at the upper limit, $t = x$:

$$\int_0^x \cos(t^3) \, dt = x - \frac{x^7}{7\cdot 2!} + \frac{x^{13}}{13\cdot 4!} - \frac{x^{19}}{19\cdot 6!} + \dots$$

We can write the series in summation notation like this:

$$\int_0^x \cos(t^3)\, dt = \sum_{n = 0}^{\infty} \frac{(-1)^n x^{6n+1}}{(6n + 1)(2n)!}$$
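It's reassuring to check a result like this numerically. The sketch below sums the first few terms of the series and compares them with a numerical integral of $\cos(t^3)$ from 0 to $x$. Simpson's rule is my own choice of check here, not something used in the derivation, and the names `series_integral` and `simpson` are hypothetical.

```python
import math

def series_integral(x, n_terms):
    """Partial sum of (-1)^n x^(6n+1) / ((6n+1) (2n)!) for n = 0 .. n_terms - 1."""
    return sum((-1) ** n * x ** (6 * n + 1)
               / ((6 * n + 1) * math.factorial(2 * n))
               for n in range(n_terms))

def simpson(f, a, b, n=1000):
    """Composite Simpson's rule on [a, b] with n (even) subintervals."""
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

x = 1.0
print(series_integral(x, 4))                         # four-term series estimate
print(simpson(lambda t: math.cos(t ** 3), 0.0, x))   # numerical integral
```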

-

Find the MacLaurin series for $f(x) = \frac{1}{1 + x^2}$ and express it with summation notation.

Solution

First we find some derivatives:

$$ \begin{align} f(x) &= \frac{1}{1 + x^2} \\[5pt] f'(x) &= \frac{-2x}{(1 + x^2)^2} \\[5pt] f''(x) &= - \frac{2}{(1 + x^2)^2} + \frac{8x^2}{(1 + x^2)^3} \\[5pt] f^{(3)}(x) &= \frac{24x}{(1 + x^2)^3} - \frac{48x^3}{(1 + x^2)^4} \\[5pt] f^{(4)}(x) &= \frac{24}{(1 + x^2)^3} - \frac{288x^2}{(1 + x^2)^4} + \frac{384x^4}{(1 + x^2)^5} \end{align}$$

Now evaluate each of those at x = 0:

$$ \begin{matrix} f(0) = 1 && f^{(3)}(0) = 0 \\[5pt] f'(0) = 0 && f^{(4)}(0) = 24 \\[5pt] f''(0) = -2 \end{matrix}$$

Next plug those values into the MacLaurin series formula:

$$f(x) \approx \frac{1x^0}{0!} + \frac{0x^1}{1!} - \frac{2x^2}{2!} + \frac{0x^3}{3!} + \frac{24x^4}{4!} + \dots$$

So the series is

$$f(x) \approx 1 - x^2 + x^4 - x^6 + \dots$$

In summation notation:

$$\frac{1}{1 + x^2} \approx \sum_{n = 0}^{\infty} (-1)^n \, x^{2n}$$

-

Find the 3rd-degree Taylor series approximation of the function $f(x) = x^{1/2}$ centered around $x = 2$.

Solution

First we find the derivatives and evaluate them at x = 2:

$$ \begin{matrix} f(x) = x^{\frac{1}{2}} && f(2) = \sqrt{2} \\[5pt] f'(x) = \frac{1}{2}x^{-\frac{1}{2}} && f'(2) = \frac{1}{2 \sqrt{2}} \\[5pt] f''(x) = -\frac{1}{4} x^{-\frac{3}{2}} && f''(2) = -\frac{1}{4 \sqrt{2^3}} \\[5pt] f^{(3)}(x) = \frac{3}{8} x^{-\frac{5}{2}} && f^{(3)}(2) = \frac{3}{8 \sqrt{2^5}} \\[5pt] f^{(4)}(x) = -\frac{15}{16} x^{-\frac{7}{2}} && f^{(4)}(2) = -\frac{15}{16 \sqrt{2^7}} \end{matrix}$$

Next, plug those into the Taylor series formula, keeping terms up to the 3rd degree:

$$f(x) \approx \frac{\sqrt{2} \, (x - 2)^0}{0!} + \frac{(x - 2)^1}{1! \cdot 2 \sqrt{2}} - \frac{(x - 2)^2}{2! \cdot 4 \sqrt{2^3}} + \frac{3 (x - 2)^3}{3! \cdot 8 \sqrt{2^5}}$$

and simplify as much as possible:

$$f(x) \approx \sqrt{2} + \frac{x - 2}{2 \sqrt{2}} - \frac{(x - 2)^2}{8 \sqrt{2^3}} + \frac{(x - 2)^3}{16 \sqrt{2^5}}$$
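As a sanity check, here is a minimal Python sketch that builds the 3rd-degree polynomial directly from the derivative formulas $f'(x) = \frac{1}{2}x^{-1/2}$, $f''(x) = -\frac{1}{4}x^{-3/2}$ and $f^{(3)}(x) = \frac{3}{8}x^{-5/2}$, and compares it with the library square root. The function name `sqrt_taylor3` is my own.

```python
import math

def sqrt_taylor3(x):
    """3rd-degree Taylor polynomial of sqrt(x) centered at a = 2."""
    a = 2.0
    d0 = math.sqrt(a)          # f(2)
    d1 = 0.5 * a ** -0.5       # f'(2)
    d2 = -0.25 * a ** -1.5     # f''(2), note the negative sign
    d3 = 0.375 * a ** -2.5     # f'''(2)
    u = x - a                  # distance from the center
    return d0 + d1 * u + d2 * u**2 / 2 + d3 * u**3 / 6

# Polynomial value next to the library value
for x in (1.5, 2.0, 2.5, 3.0):
    print(x, sqrt_taylor3(x), math.sqrt(x))
```

The agreement is best near $x = 2$ and slowly degrades as we move away from the center.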


xaktly.com by Dr. Jeff Cruzan is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License. © 2012-2025, Jeff Cruzan. All text and images on this website not specifically attributed to another source were created by me and I reserve all rights as to their use. Any opinions expressed on this website are entirely mine, and do not necessarily reflect the views of any of my employers. Please feel free to send any questions or comments to jeff.cruzan@verizon.net.