Doing things with matrices

In this section we'll learn how to add (and subtract) and multiply matrices and vectors. If you aren't sure about terminology, head back to the matrix definitions page to review it.

Matrices and vectors are related: We define matrices by the number of rows and columns they contain, in that order. Rows are horizontal, columns are vertical.

A vector is just a one-dimensional matrix. It can be written either as a row vector or a column vector; the choice is based on convenience.

We very often need to add or subtract matrices and vectors (mostly vectors), and even more often in linear algebra we multiply them.

Adding matrices and vectors

We can add (or subtract) two matrices or two vectors, as long as the dimensions of both are the same. We can add an $m \times n$ matrix to another $m \times n$ matrix, but not to a matrix with either dimension different.

First we'll look at vector addition using 3D vectors:

$$\left(\begin{array}{r} a_1 \\ a_2 \\ a_3 \end{array} \right) + \left(\begin{array}{r} b_1 \\ b_2 \\ b_3 \end{array} \right) = \left(\begin{array}{r} a_1 + b_1 \\ a_2 + b_2 \\ a_3 + b_3 \end{array} \right)$$

Here is an example. It couldn't be simpler, and hopefully you can see why adding vectors of different dimensions just won't work: some elements would have no partner to be added to.

$$\left(\begin{array}{r} 2 \\ 4 \\ -1 \end{array} \right) + \left(\begin{array}{r} 2 \\ 4 \\ 1 \end{array} \right) = \left(\begin{array}{r} 4 \\ 8 \\ 0 \end{array} \right)$$
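If you'd like to check a sum like this on a computer, here is a minimal sketch in plain Python. Vectors are stored as ordinary lists, and the function name `add_vectors` is made up for this illustration; it isn't part of any library.

```python
def add_vectors(a, b):
    """Add two vectors (lists of numbers) element by element."""
    if len(a) != len(b):
        # Vectors of different dimensions leave some elements without a partner.
        raise ValueError("vectors must have the same dimension")
    return [x + y for x, y in zip(a, b)]


print(add_vectors([2, 4, -1], [2, 4, 1]))  # [4, 8, 0]
```

Trying `add_vectors([1, 2], [1, 2, 3])` raises an error, which is the code's way of saying the sum isn't defined.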

Now we can simply extend this idea to addition or subtraction of matrices:

$$\left(\begin{array}{rrr} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{array} \right) + \left(\begin{array}{rrr} b_{11} & b_{12} & b_{13} \\ b_{21} & b_{22} & b_{23} \\ b_{31} & b_{32} & b_{33} \end{array} \right) = \left(\begin{array}{rrr} a_{11}+b_{11} & a_{12}+b_{12} & a_{13}+b_{13} \\ a_{21}+b_{21} & a_{22}+b_{22} & a_{23}+b_{23} \\ a_{31}+b_{31} & a_{32}+b_{32} & a_{33}+b_{33} \end{array} \right)$$

Here's a specific example:

$$\left(\begin{array}{rrr} 1 & 2 & -1 \\ -2 & -3 & 4 \\ 5 & 5 & 0 \end{array} \right) + \left(\begin{array}{rrr} 1 & 2 & 1 \\ -1 & 4 & -2 \\ -2 & -3 & 1 \end{array} \right) = \left(\begin{array}{rrr} 2 & 4 & 0 \\ -3 & 1 & 2 \\ 3 & 2 & 1 \end{array} \right)$$
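The same sum can be sketched in plain Python, assuming each matrix is stored as a list of rows. The name `add_matrices` is hypothetical, chosen only for this example.

```python
def add_matrices(A, B):
    """Add two matrices (lists of rows) element by corresponding element."""
    same_rows = len(A) == len(B)
    same_cols = all(len(ra) == len(rb) for ra, rb in zip(A, B))
    if not (same_rows and same_cols):
        raise ValueError("matrices must have the same dimensions")
    # Pair up the rows, then pair up the elements within each pair of rows.
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]


A = [[1, 2, -1], [-2, -3, 4], [5, 5, 0]]
B = [[1, 2, 1], [-1, 4, -2], [-2, -3, 1]]
print(add_matrices(A, B))  # [[2, 4, 0], [-3, 1, 2], [3, 2, 1]]
```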

As usual, subtraction is just addition of the negative, so if you know how to add matrices and vectors, you already know how to subtract them.
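As a quick worked illustration (added here, reusing the two vectors from the addition example), subtracting the second vector from the first means negating every element of the second and then adding:

$$\left(\begin{array}{r} 2 \\ 4 \\ -1 \end{array} \right) - \left(\begin{array}{r} 2 \\ 4 \\ 1 \end{array} \right) = \left(\begin{array}{r} 2 \\ 4 \\ -1 \end{array} \right) + \left(\begin{array}{r} -2 \\ -4 \\ -1 \end{array} \right) = \left(\begin{array}{r} 0 \\ 0 \\ -2 \end{array} \right)$$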

For matrices or vectors to be added, they must have the same dimensions.

Matrices and vectors are added or subtracted element by corresponding element.

Remember that a vector is the location of a point in some $n$-dimensional space. I'll pause again here just to say that an "$n$-dimensional space" can be confusing at first. But in fact, a system can have just about as many dimensions as you can think of. You just might have to tweak what you mean by "dimension."

When we multiply two vectors in the way described below, the result is a scalar. A scalar is just a number. You can think of a scalar as a quantity with a size but no direction (like a temperature or a speed), and a vector as a quantity with both a size and a direction (like a force or a velocity).

When two $n$-dimensional vectors are multiplied, we multiply the first element of the first vector by the first element of the second, add that to the product of the second elements, then the product of the third elements, and so on, up to $n$ products. This kind of product is referred to as a dot product or a scalar product. Here's what it looks like:

Let $\vec a = (a_1, a_2, a_3 \dots , a_n)$ and

$\phantom{000} \vec b = (b_1, b_2, b_3 \dots , b_n)$.

Then

$$\vec a \cdot \vec b = a_1 b_1 + a_2 b_2 + \dots + a_n b_n$$

Here's an example of the dot product of two three-dimensional vectors. If

$$ \begin{align} \vec a = (1, 4, -2) \\[5pt] \vec b = (-2, 1, 7), \end{align}$$

then

$$ \begin{align} \vec a \cdot \vec b &= 1(-2) + 4(1) + (-2)7 \\[5pt] &= -2 + 4 - 14 = -12 \end{align}$$
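Here is the same calculation as a short Python sketch. The function name `dot` is just a label picked for this example, and vectors are assumed to be plain lists of numbers.

```python
def dot(a, b):
    """Dot (scalar) product of two vectors of the same dimension."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    # Multiply matching elements, then add up all n products.
    return sum(x * y for x, y in zip(a, b))


print(dot([1, 4, -2], [-2, 1, 7]))  # -12
```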

The dot product is fully discussed in another section, but it's worth pointing out its geometric interpretation. The dot product of two vectors is related to the angle between them by

$$\vec a \cdot \vec b = |\vec a||\vec b| \cos(\theta),$$

where $\theta$ is the angle between the vectors and $|\vec a|$ represents the length of vector $\vec a$.
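Rearranging that relationship gives $\cos(\theta) = \vec a \cdot \vec b / (|\vec a||\vec b|)$, so we can recover the angle from the dot product. The sketch below does this in Python for the two vectors of the example above. The helper names `dot`, `length` and `angle_between` are invented for this illustration, and the code assumes neither vector has zero length.

```python
import math


def dot(a, b):
    """Dot product of two vectors of the same dimension."""
    return sum(x * y for x, y in zip(a, b))


def length(a):
    """Length of a vector: the square root of its dot product with itself."""
    return math.sqrt(dot(a, a))


def angle_between(a, b):
    """Angle (in radians) between two nonzero vectors."""
    cos_theta = dot(a, b) / (length(a) * length(b))
    # Clamp to [-1, 1] in case rounding pushes the value slightly outside.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.acos(cos_theta)


theta = angle_between([1, 4, -2], [-2, 1, 7])
print(math.degrees(theta))  # roughly 111 degrees
```

The negative dot product ($-12$) shows up here as an angle larger than 90°.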

Multiplying a vector by a square matrix

Seeing the matrix as an "operator"

We frequently need to multiply a vector by a matrix. This always means that the matrix is on the left and the vector (written as a column vector) is on the right.

It might help to begin thinking of a matrix in this situation as an "operator," something that alters a vector in a certain way, but is unchanged itself.

This isn't only a good way to think about matrix-vector multiplication, but a handy way to think of a number of concepts you'll encounter later on in math.

Here's a first example. We'll multiply the 3×1 vector $\vec b$ by the 3×3 matrix $A$ to make a new 3×1 vector. You'll have to follow all of the subscripts as we multiply matrix $A$ by vector $\vec b$:

$$A = \left(\begin{array}{rrr} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{array} \right) \phantom{000} \vec b = \left( \begin{array}{r} x_1 \\ x_2 \\ x_3 \end{array} \right) \phantom{000} A \vec b = \left( \begin{array}{r} {a_{11}x_1 + a_{12}x_2 + a_{13}x_3} \\ {a_{21}x_1 + a_{22}x_2 + a_{23}x_3} \\ {a_{31}x_1 + a_{32}x_2 + a_{33}x_3} \end{array} \right)$$

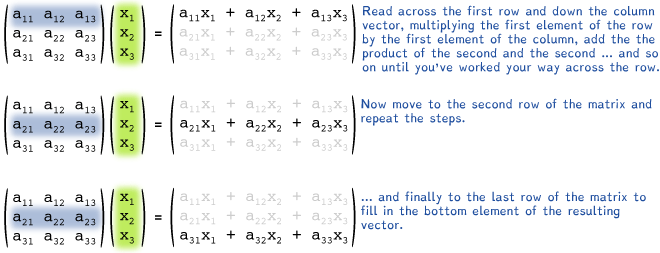

The step-by-step details of how that multiplication was performed are illustrated below, but notice that it's really like taking the dot product of the $m^{th}$ row of the matrix and the vector to get the $m^{th}$ element of the new vector.

Each row of the matrix $A$ is multiplied, one element at a time, by the corresponding element of the column vector, and the resulting products are added to give the corresponding row element of the new vector.

(Notice that the result of multiplying a 3×3 matrix with a 3×1 vector is a 3×1 vector.)
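The row-by-row recipe just described can be sketched in a few lines of Python. This is only an illustration: the function name `mat_vec` is hypothetical, the matrix is assumed to be stored as a list of rows, and the numbers are made up rather than taken from the examples on this page.

```python
def mat_vec(A, x):
    """Multiply matrix A (a list of rows) by column vector x (a list)."""
    if any(len(row) != len(x) for row in A):
        raise ValueError("each row of A must have as many elements as x")
    # Element i of the new vector is the dot product of row i of A with x.
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


# Made-up numbers, chosen only for illustration.
A = [[1, 0, 2],
     [0, 1, -1],
     [3, 1, 0]]
x = [2, 1, 3]
print(mat_vec(A, x))  # [8, -2, 7]
```

Notice that `A` itself is untouched by the calculation, which fits the picture of the matrix as an operator that changes the vector but not itself.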

Once the first row of the new vector is determined, we move to the second and third rows of the matrix, in turn, to find the second and third elements of the new vector. Notice that each new element of the new 3×1 vector is just the dot product of the appropriate row of the matrix with the column vector. See if you can follow it:

Example 1

Here is a real example of multiplication of a column vector by a square matrix. It helps to know before you begin that the product of a 3×3 matrix and a 3×1 vector will be another 3×1 vector. It can be useful to think of the matrix as something that operates on a vector to change it into some other vector.

We find the product by building the new vector element-by-element (row-by-row). See if you can follow the steps below to get to the resulting vector, $(6, 13, 11)$.

Multiplying matrices

Multiplying two matrices is just a simple extension of multiplying vectors by matrices if we think of the second matrix as a row of column vectors. The $(m, n)$ element of the new matrix is the dot product of the $m^{th}$ row of the first matrix and the $n^{th}$ column of the second, and we simply do this for every combination of rows on the left and columns on the right. Here's what it looks like for a 3×3 matrix multiplied by a 3×2 matrix:

$$\left(\begin{array}{rrr} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{array} \right) \left(\begin{array}{rr} b_{11} & b_{12} \\ b_{21} & b_{22} \\ b_{31} & b_{32} \end{array} \right) = \left( \begin{array}{rr} a_{11}b_{11} + a_{12}b_{21} + a_{13}b_{31} & a_{11}b_{12} + a_{12}b_{22} + a_{13}b_{32} \\ a_{21}b_{11} + a_{22}b_{21} + a_{23}b_{31} & a_{21}b_{12} + a_{22}b_{22} + a_{23}b_{32} \\ a_{31}b_{11} + a_{32}b_{21} + a_{33}b_{31} & a_{31}b_{12} + a_{32}b_{22} + a_{33}b_{32} \end{array} \right)$$

The dimensions of multiplied matrices must match

We can't multiply a 3×3 matrix by a 2×3 matrix because as we take what amounts to the dot product of the first row of the 3×3 and the first column of the 2×3, we run out of elements to multiply in the column vector. Here's what that might look like:

Now if we try to form the first (upper left) element of the product matrix, we find a mismatch in the number of elements:

So the dimensions must match. Here's an easy-to-remember rule:

We can multiply a 4×4 matrix by any matrix or vector with 4 rows, or more generally, we can multiply an m×n matrix by an n×p matrix, where p can be any positive integer. The result is an m×p matrix. All that matters is that the number of columns on the left matches the number of rows on the right. The way that this rule is written above might help you remember it, but in any case, if you try to do the wrong thing, you'll notice it because at some point you'll run out of numbers.
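One way to make the rule concrete is a tiny Python check that takes the two sets of dimensions and reports the dimensions of the product, or complains if the product doesn't exist. The function name `product_shape` and the use of `(rows, columns)` tuples are assumptions made for this sketch.

```python
def product_shape(left, right):
    """Dimensions of a matrix product, given each factor as (rows, columns)."""
    m, n = left
    rows_right, p = right
    if n != rows_right:
        # Columns on the left must match rows on the right.
        raise ValueError(f"cannot multiply {m}x{n} by {rows_right}x{p}")
    return (m, p)


print(product_shape((3, 3), (3, 2)))  # (3, 2)
print(product_shape((4, 4), (4, 1)))  # (4, 1)
```

Calling `product_shape((3, 3), (2, 3))` raises an error, matching the 3×3 by 2×3 case that failed above.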

Example: (3×3)·(3×2) → (3×2)

$$\left( \begin{array}{rrr} 1 & 2 & 3 \\ -1 & 1 & 2 \\ 2 & -3 & 4 \end{array} \right) \left( \begin{array}{rr} 2& 1 \\ -1 & 2 \\ 3 & 3 \end{array} \right) = \left( \begin{array}{rr} 1 \cdot 2 + 2 \cdot (-1) + 3 \cdot 3 & 1 \cdot 1 + 2 \cdot 2 + 3 \cdot 3 \\ -1\cdot 2 + 1 \cdot (-1) + 2 \cdot 3 & -1 \cdot 1 + 1 \cdot 2 + 2 \cdot 3 \\ 2 \cdot 2 + (-3)(-1) + 4 \cdot 3 & 2 \cdot 1 + (-3)2 + 4 \cdot 3 \end{array} \right) = \left( \begin{array}{rr} 9 & 14 \\ 3 & 7 \\ 19 & 8 \end{array} \right)$$
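If you want to check a product like this one by machine, here is a minimal Python sketch of the row-times-column procedure. The function name `mat_mul` is made up for the example, and matrices are assumed to be stored as lists of rows. In practice a numerical library would normally do this job, but writing it out shows that nothing more than dot products is involved.

```python
def mat_mul(A, B):
    """Multiply matrix A by matrix B (both stored as lists of rows)."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    # zip(*B) regroups B by columns, so we can pair each row of A with each column of B.
    columns = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in columns]
            for row in A]


A = [[1, 2, 3],
     [-1, 1, 2],
     [2, -3, 4]]
B = [[2, 1],
     [-1, 2],
     [3, 3]]
for row in mat_mul(A, B):
    print(row)
```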

Practice problems

Consider these vectors and matrices:

$$A = \left( \begin{array}{rrr} -1 & 0 & 1 \\ -1 & -1 & 3 \\ 2 & -2 & 2 \end{array} \right) \phantom{000} B = \left( \begin{array}{rr} 4 & 1 \\ -3 & 2 \\ 1 & -3 \end{array} \right)$$

$$\vec b = (2, 3, 4, 1) \phantom{000} \vec c = \left( \begin{array}{r} 2 \\ 7 \end{array} \right)$$

$$C = \left( \begin{array}{rrrr} -1 & 1 & -1 & 2 \\ -4 & -1 & 3 & -3 \\ 2 & -3 & 1 & 4 \\ 3 & -1 & -2 & 3 \end{array} \right) \phantom{000} \vec a = \left( \begin{array}{r} -1 \\ 3 \\ -2 \\ 1 \end{array} \right)$$

$$D = \left( \begin{array}{rrrr} 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \\ 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 1 \end{array} \right) \phantom{000} E = \left( \begin{array}{rrrr} 1 & 1 & 0 & 0 \\ 1 & 1 & 0 & 0 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 1 & 1 \end{array} \right)$$

Find the following sums, differences and products:


xaktly.com by Dr. Jeff Cruzan is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License. © 2016-2025, Jeff Cruzan. All text and images on this website not specifically attributed to another source were created by me and I reserve all rights as to their use. Any opinions expressed on this website are entirely mine, and do not necessarily reflect the views of any of my employers. Please feel free to send any questions or comments to jeff.cruzan@verizon.net.